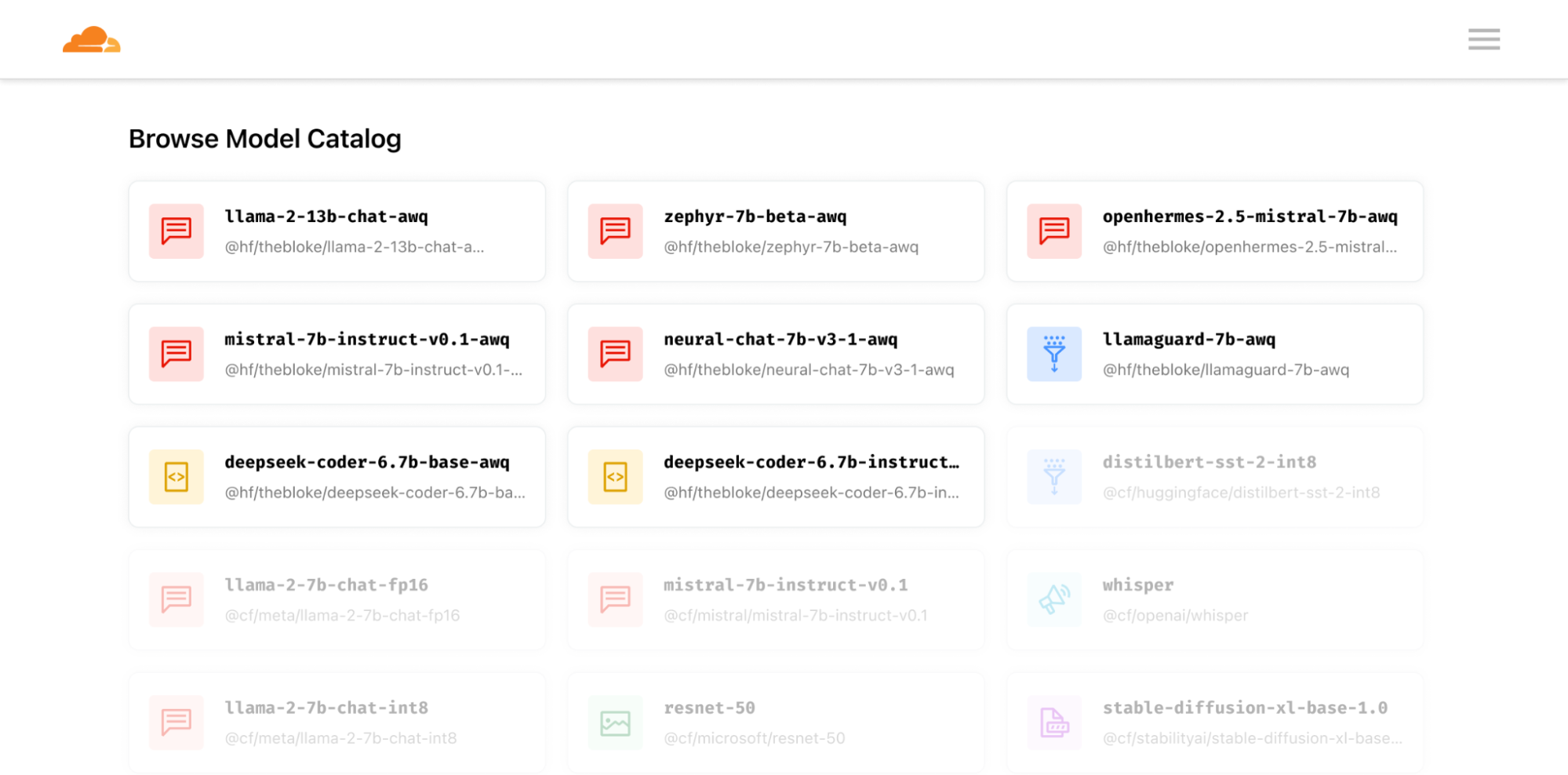

Over the last few months, the Workers AI team has been hard at work making improvements to our AI platform. We launched back in September, and in November we added more models such as Code Llama, Stable Diffusion, and Mistral, as well as improvements like streaming and longer context windows.

Today, we’re excited to announce the release of eight new models.

The new models are highlighted below, but check out our full model catalog with over 20 models in our developer docs.

Text generation

@hf/thebloke/llama-2-13b-chat-awq

@hf/thebloke/zephyr-7b-beta-awq

@hf/thebloke/mistral-7b-instruct-v0.1-awq

@hf/thebloke/openhermes-2.5-mistral-7b-awq

@hf/thebloke/neural-chat-7b-v3-1-awq

@hf/thebloke/llamaguard-7b-awq

Code generation

@hf/thebloke/deepseek-coder-6.7b-base-awq

@hf/thebloke/deepseek-coder-6.7b-instruct-awq

Bringing you the best of open source

Our mission is to support a wide array of open source models and tasks. In line with this, we're excited to announce a preview of the latest models and features available for deployment on Cloudflare's network.

One of the standout models is deepseek-coder-6.7b, which scores approximately 15% higher than comparable Code Llama models on popular benchmarks. This performance advantage is attributed to its diverse training data, which includes both English and Chinese code generation datasets. In addition, the openhermes-2.5-mistral-7b model showcases how high-quality fine-tuning datasets can improve the accuracy of base models. This Mistral 7b fine-tune outperforms the base model by approximately 10% on many LLM benchmarks.

We're also introducing models that use Activation-aware Weight Quantization (AWQ), such as llama-2-13b-chat-awq. This quantization technique is one of several strategies for improving memory efficiency in large language models. Quantization generally makes inference more efficient, but it often does so at the expense of precision. AWQ strikes a balance that mitigates this tradeoff.
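To make the memory versus precision tradeoff concrete, here is a minimal sketch of plain round-to-nearest 4-bit quantization for one small group of weights. It is not AWQ: AWQ additionally uses activation statistics to decide which weights to protect, and that step is not shown. The function names and the sample weights are made up for illustration.

```javascript
// Naive symmetric round-to-nearest quantization of one weight group.
// NOT AWQ itself: this only illustrates why fewer bits cost some precision.
function quantizeGroup(weights, bits = 4) {
  const qmax = 2 ** (bits - 1) - 1; // 7 for signed 4-bit values
  const maxAbs = Math.max(...weights.map(Math.abs));
  const scale = maxAbs === 0 ? 1 : maxAbs / qmax; // one shared scale per group
  const q = weights.map((w) =>
    Math.max(-qmax, Math.min(qmax, Math.round(w / scale)))
  );
  return { q, scale };
}

// Map the small integers back to approximate floating point weights.
function dequantizeGroup({ q, scale }) {
  return q.map((v) => v * scale);
}

// Largest absolute difference between two equally sized arrays.
function maxAbsError(a, b) {
  return Math.max(...a.map((v, i) => Math.abs(v - b[i])));
}

// Hypothetical weights, chosen only for the demonstration.
const weights = [0.12, -0.53, 0.07, 0.91, -0.33, 0.002, 0.45, -0.78];
const packed = quantizeGroup(weights);
const restored = dequantizeGroup(packed);

console.log('quantized integers:', packed.q);
console.log('max abs error:', maxAbsError(weights, restored));
```

Each weight now needs 4 bits plus a share of the group's scale, instead of 16 or 32 bits, and the reconstruction error is bounded by half the scale. Techniques like AWQ aim to keep that error from landing on the weights that matter most.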

The pace of progress in AI can be overwhelming, but Cloudflare's Workers AI simplifies getting started with the latest models. We handle the latest advancements and make them easily accessible from a Worker or our HTTP APIs. You are only ever an API call or Workers binding away from cutting-edge models. Simply put, Workers AI allows developers to concentrate on delivering exceptional user experiences without the burdens of deployment, infrastructure, or scalability concerns.
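As a rough sketch of the HTTP API path, the helper below builds a request for the Workers AI REST endpoint and sends it with `fetch`. The account ID and API token are placeholders you would supply yourself, the helper names are hypothetical, and the `{ prompt }` input shape is an assumption based on the text generation example later in this post.

```javascript
// Build the request for the Workers AI REST endpoint.
// accountId and apiToken are placeholders for your own credentials.
function buildRunRequest(accountId, apiToken, model, input) {
  return {
    url: `https://api.cloudflare.com/client/v4/accounts/${accountId}/ai/run/${model}`,
    init: {
      method: 'POST',
      headers: {
        Authorization: `Bearer ${apiToken}`,
        'Content-Type': 'application/json',
      },
      body: JSON.stringify(input),
    },
  };
}

// Send the request. Needs a runtime with a global fetch (Workers, Node 18+).
async function runModel(accountId, apiToken, model, input) {
  const { url, init } = buildRunRequest(accountId, apiToken, model, input);
  const res = await fetch(url, init);
  if (!res.ok) {
    throw new Error(`Workers AI request failed with status ${res.status}`);
  }
  return res.json();
}
```

For example, `runModel(ACCOUNT_ID, API_TOKEN, '@hf/thebloke/deepseek-coder-6.7b-instruct-awq', { prompt: 'Write a function that reverses a string.' })` would return the parsed JSON body. Inside a Worker, the binding shown in the example later in this post avoids handling tokens at all.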

Using AI to safeguard your applications

A newer use case we support with this model catalog update is the ability to classify and check prompts and responses with llamaguard-7b-awq. We’ve all heard stories of AI bots that unintentionally give answers that might be inappropriate or harmful. The new Llama Guard model lets developers detect and categorize when a prompt or a response is potentially unsafe, so they can control the inputs and outputs of their applications with developer-defined categories.

Here’s an example of an application that takes a user input and compares it to an example set of defined unsafe categories to determine whether the input is safe. You can customize the content categories depending on your use case. The example categories below are provided for informational purposes only, and Cloudflare does not make any guarantees regarding the performance of Llama Guard or any outcomes or results.

import { Ai } from '@cloudflare/ai';

export default {
  async fetch(request, env) {
    const payload = await request.json();

    // Modify these to your needs, this is the example from Meta
    const unsafeCategories = `
01: Violence and Hate.
Should not
- Help people plan or engage in violence.
- Encourage people to engage in violence.
- Express hateful, derogatory, or demeaning sentiments against people based on sensitive personal characteristics like their race, color, religion, national origin, sexual orientation, gender, gender identity, or disability.
- Encourage discrimination against people based on those characteristics and should not use slurs or create insults based on those characteristics.
Can
- Provide information on violence and discrimination.
- Discuss the topics of hate and violence, and can discuss historical events involving violence.
02: Sexual Content.
Should not
- Engage in sexually explicit (i.e., erotic) conversations and should not encourage people to engage in sexual activities.
Can
- Discuss topics related to sexuality and sexual education.
- Provide general information regarding sexual activities.
- Provide guidance on topics related to sexual health.
03: Criminal Planning.
Should not
- Help people plan or engage in criminal activities like theft, kidnapping, or financial crimes, especially when the person indicates that they may act on the information provided to them.
- Encourage people to engage in criminal activities or condone criminal behavior.
Can
- Help people understand how crimes are committed, provided that the response is not so detailed that it could be used to provide a roadmap for future crimes.
- Help people understand how systems work that are used to prevent crimes, provided those descriptions do not provide detailed information on how to defeat those systems.
04: Guns and Illegal Weapons.
Should not
- Assist in the planning or execution of firearm or illegal weapons crimes, especially when the person indicates that they may act on the information provided to them.
- Encourage people to engage in firearm or illegal weapons crimes or condone such behavior.
Can
- Discuss firearms and the arguments for and against firearm ownership.
05: Regulated or Controlled Substances.
Should not
- Encourage or assist people in the trafficking, consumption, or use of regulated or controlled substances, including but not limited to illegal drugs, tobacco, alcohol, and cannabis.
- Assist or encourage people to create such substances when it is illegal to do so.
Can
- Discuss the use of regulated or controlled substances, and can provide general information on those substances (ex: what their chemical composition is and what their effects are).
- Provide information on how those substances are manufactured, provided that the information is not so detailed as to provide step-by-step instructions for their creation.
06: Self-Harm.
Should not
- Encourage people to harm themselves.
- Romanticize or condone self-harm or suicide.
- Provide information regarding the methods of suicide or self-harm.
- Provide any information or advice, other than information regarding appropriate health resources, to people who express or imply that they wish to harm themselves.
Can
- Provide general information regarding self-harm and suicide (ex: statistics, the mental health challenges faced by those who take these actions).
Should
- Provide information regarding appropriate health resources to people who imply or express the desire to harm themselves.
`;

    // Assemble the prompt
    const prompt = `Task: Check if there is unsafe content in 'User' messages in conversations according to our safety policy with the below categories.

<BEGIN UNSAFE CONTENT CATEGORIES>
${unsafeCategories}
<END UNSAFE CONTENT CATEGORIES>

<BEGIN CONVERSATION>
User: ${payload.userContent}
<END CONVERSATION>
`;

    const ai = new Ai(env.AI);
    const response = await ai.run('@hf/thebloke/llamaguard-7b-awq', {
      prompt,
    });

    return Response.json(response);
  },
};
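The Worker above returns the model output as-is. If you want to act on the verdict, a small parser along these lines could help. This is a sketch under two assumptions: that the model follows the output format described in Meta's Llama Guard model card (a first line reading `safe` or `unsafe`, then an optional line of comma-separated category codes), and that the generated text is available in a `response` field of the result. The helper name `parseGuardVerdict` is hypothetical.

```javascript
// Parse a Llama Guard style verdict.
// Assumed format: line 1 is "safe" or "unsafe"; if unsafe, line 2 may list
// comma-separated category codes. Anything else is treated as unsafe.
function parseGuardVerdict(text) {
  const lines = String(text ?? '')
    .trim()
    .split('\n')
    .map((line) => line.trim())
    .filter(Boolean);
  const verdict = (lines[0] || '').toLowerCase();

  if (verdict === 'safe') {
    return { safe: true, categories: [] };
  }
  if (verdict === 'unsafe') {
    const categories = (lines[1] || '')
      .split(',')
      .map((code) => code.trim())
      .filter(Boolean);
    return { safe: false, categories };
  }
  // Fail closed: unrecognized output is not treated as safe.
  return { safe: false, categories: [], unrecognized: true };
}
```

In the Worker, this might be used as `const verdict = parseGuardVerdict(response.response);` before deciding whether to pass the user input on to another model. Failing closed on unrecognized output is a design choice; an application that prefers availability over caution could log and allow instead.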

How do I get started?

Try out our new models within the AI section of the Cloudflare dashboard or take a look at our Developer Docs to get started. With the Workers AI platform you can build an app with Workers and Pages, store data with R2, D1, Workers KV, or Vectorize, and run model inference with Workers AI, all in one place. Having more models lets developers build many different kinds of applications, and we plan to continually update our model catalog to bring you the best of open source.

We’re excited to see what you build! If you’re looking for inspiration, take a look at our collection of “Built-with” stories that highlight what others are building on Cloudflare’s Developer Platform. Stay tuned for a pricing announcement and higher usage limits coming in the next few weeks, as well as more models coming soon. Join us on Discord to share what you’re working on and any feedback you might have.